'AI scientist' created to run its own experiments. What will this mean for scientific discoveries?

07.09.2024 - 11:59 / euronews.com / European Commission

Researchers at Sakana.AI have developed an artificial intelligence (AI) model that may be able to automate the entire scientific research process.

The "AI Scientist" can identify a problem, develop hypotheses, implement ideas, run experiments, analyse results, and write reports.

The researchers also incorporated a secondary language model to peer review and evaluate the quality of these reports and validate the findings.

"We sort of think of this as a type of GPT-1 moment for generative scientific discovery," Robert Lange, research scientist and founding member at Sakana.AI, told Euronews Next, adding that much like AI's early stages in other fields, its true potential in science is only just beginning to be realised.

AI’s integration into science has faced some limitations due to the complexities of the field and ongoing issues with these tools, such as hallucinations and questions about ownership.

Yet its influence in science may already be more widespread than many realise, as researchers often use it without clear disclosure.

Earlier this year, a study analysed writing patterns and specific word usage in academic papers published after the release of the AI chatbot ChatGPT. It estimated that around 60,000 research papers may have been enhanced or polished using AI tools.

Although the use of AI in scientific research could raise some ethical concerns, it could also present an opportunity for new advancements in the field when done properly. The European Commission says that AI can act as a "catalyst for scientific breakthroughs and a key instrument in the scientific process".

The AI Scientist project is still in its early stages, with the researchers having published a pre-print paper last month, and the system has some notable limitations.

Some of the flaws, as detailed by the researchers, include incorrect implementation of ideas, unfair comparisons to baselines, and critical errors in writing and evaluating results.

Still, Lange sees these issues as crucial stepping stones and expects that the AI model will significantly improve with more resources and time.

"When you think about the history of machine learning models, like image generation models, chatbots right now, also and text-to-video models, they oftentimes start out with some flaws and some maybe images which are generated, which are not super visually pleasing," Lange said.

"But over time, as we put in more collective resources as a community, they become much more powerful and much more capable," he added.

When tested, the AI Scientist at times displayed a degree of autonomy, exhibiting behaviours that mimic those of human researchers, such as taking extra, unexpected steps to ensure success.

For instance, instead of optimising

Germany tightens border checks: What will change for travellers?

Germany has announced that it is tightening checks at its land borders in a bid to control "irregular migration".

High-speed Brightline West Trains Will Feature Sleek Seats, a ‘Party Car,’ and More — See Inside

Construction on a high-speed rail connection between Los Angeles and Las Vegas is currently underway, and a peek inside the cars shows it will be a luxurious option when complete.

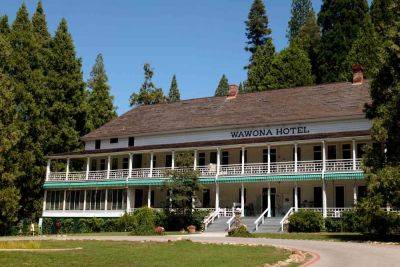

This Yosemite Hotel Is Closing Temporarily — What to Know If You Have a Reservation

A popular National Park hotel will soon begin renovations. The Wawona Hotel, located within Yosemite National Park, will close temporarily in December as the the National Parks Service plans to conduct an audit of additional repair that may need to be made following its roof replacement.

Pittsburgh, for free: 12 ways to discover a great American city

Sep 10, 2024 • 4 min read

Our 10 favorite college town hotels to book this fall, including points properties

There's something extremely nostalgic about visiting a college town after Labor Day. Even on a warm day, there's a crispness in the air. Fall is on its way as campuses hum with activity and fans cheer on their favorite college teams at packed stadiums.

This Delta SkyMiles Flash Sale Has Deals to the Bahamas, Mexico, and More — Just in Time for a Winter Getaway

A winter getaway vacation just got cheaper. Delta Air Lines recently unveiled a limited-time deal on award redemptions through their SkyMiles program for flights in January 2025. The deals, as low as 17,000 miles round-trip plus taxes, include flights to popular destinations such as Bahamas, Tulum, Mexico; and Turks and Caicos.

What’s in an (airport) name? Will Rogers World Airport gets a rebrand

In 1941, Oklahoma City Municipal Airport (OKC) was renamed to honor Oklahoma native and Cherokee Indian Will Rogers. Rogers was a legendary cowboy, actor, writer and humorist who died in Alaska in 1935 during an airplane crash with noted aviator Wiley Post.

Don't miss these 5 art exhibits running in Los Angeles right now

Sep 8, 2024 • 5 min read

Thousands of Hilton, Hyatt, and Marriott Hotel Workers Go on Strike — What to Know

Thousands of hotel workers are on strike across the country, demanding better wages and workloads and a reversal of COVID-19-era cuts.

Vestager denounces EU capitals' 'lack of efforts' in nominating women Commissioners

Outgoing European Commissioner Margrethe Vestager has denounced EU governments for undermining Ursula von der Leyen's efforts to appoint a gender-balanced 'college' of Commissioners responsible for steering the EU's powerful executive.

Hotel employees share the 7 red flags to look for when checking into a hotel

Airbnb is losing clients to hotels — but that doesn't mean the latter is always a perfect solution.